[Mock]Design a URL Shortening service like goo.gl or bit.ly

A Mock interview of a System design interview on designing a goo.gl or bit.ly like systems at Hooli

👋 Hi, this is Venkat, and here with a full issue of the ZenMode Engineer Newsletter. In every issue, I cover one topic explained in simpler terms in areas related to computer systems and tech and beyond.

Interviewer: Thanks for coming in today at Hooli. Can you start by giving us a brief overview of what a URL shortening service is and how it works?

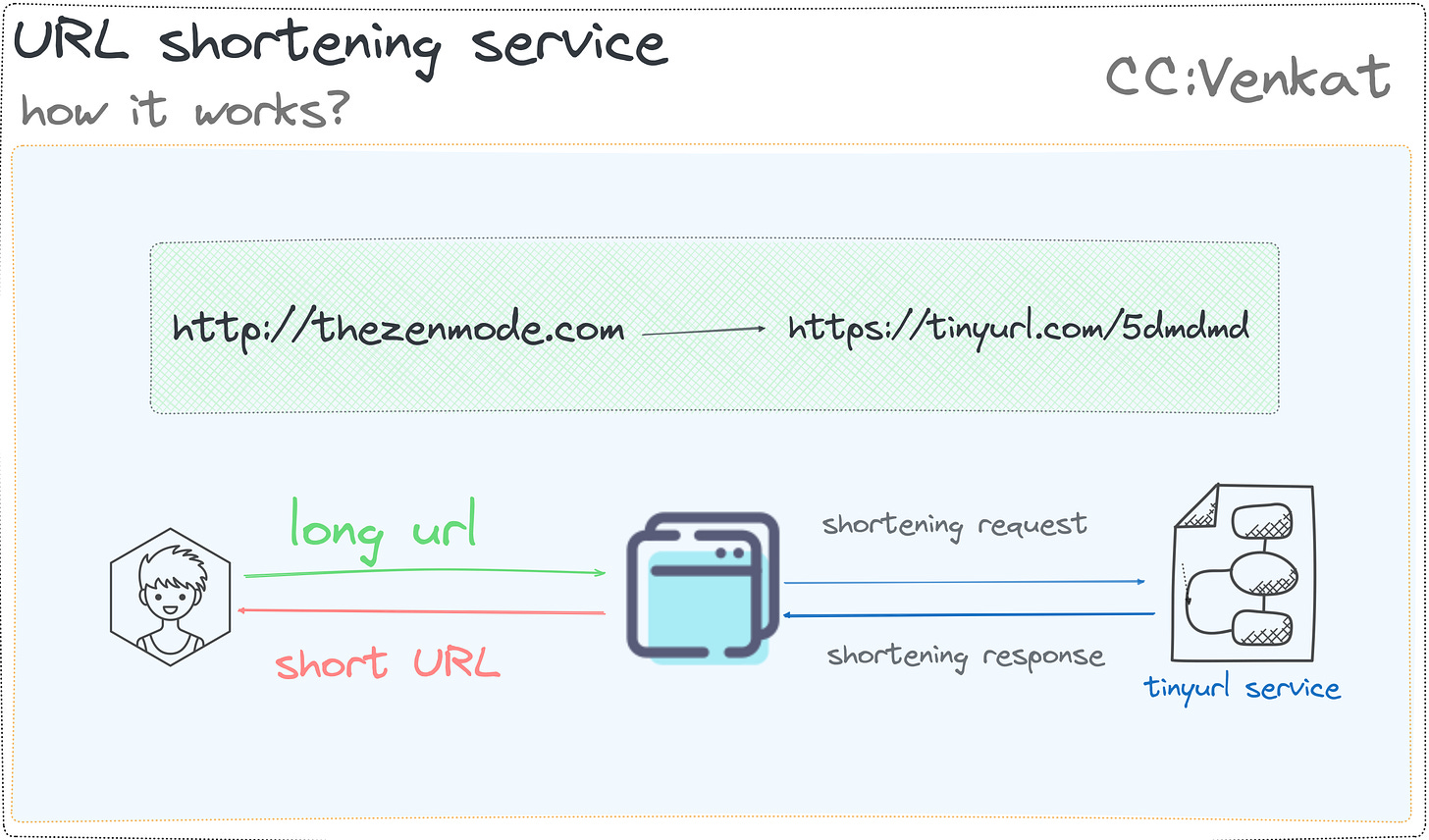

Interviewee: Sure! A URL shortening service is a service that takes long URLs and converts them into short, unique URLs that are easier to share and remember.

When a user accesses the short URL, the service redirects them to the original, long URL.

The mapping between the short URL and the long URL is stored in a database and is used to perform the redirect.

Interviewer: That's great. Can you walk us through the steps involved in shortening a URL and accessing the original URL using the short URL?

Interviewee: Sure! Here are the steps involved:

The user inputs the long URL into the URL shortening service.

The service generates a unique, short URL and stores the mapping between the short URL and the long URL in a database.

The user is given a short URL, which they can share or use as they please.

When a user accesses the short URL, the service looks up the mapping in the database and redirects the user to the original, long URL.

Interviewer: Great! Today, I'd like to discuss with you the design of a URL-shortening service like Goo. gl or bit.ly. Can you walk us through your thought process and the steps you would take to design such a system?

Interviewee: Sure! I'd be happy to discuss my approach to designing a URL-shortening service. To start, I'd like to better understand the system's requirements and constraints.

To design a system correctly, we need to ask the right questions, such as:

What are the use cases of the system?

What is the amount of Daily Active Users (DAU) for writes?

How many years should we persist the short URL by default?

What is the anticipated read: write ratio of the system?

What is the usage pattern of the shortened URL?

Who will use the URL shortener service?

What is the reasonable length of a short URL?

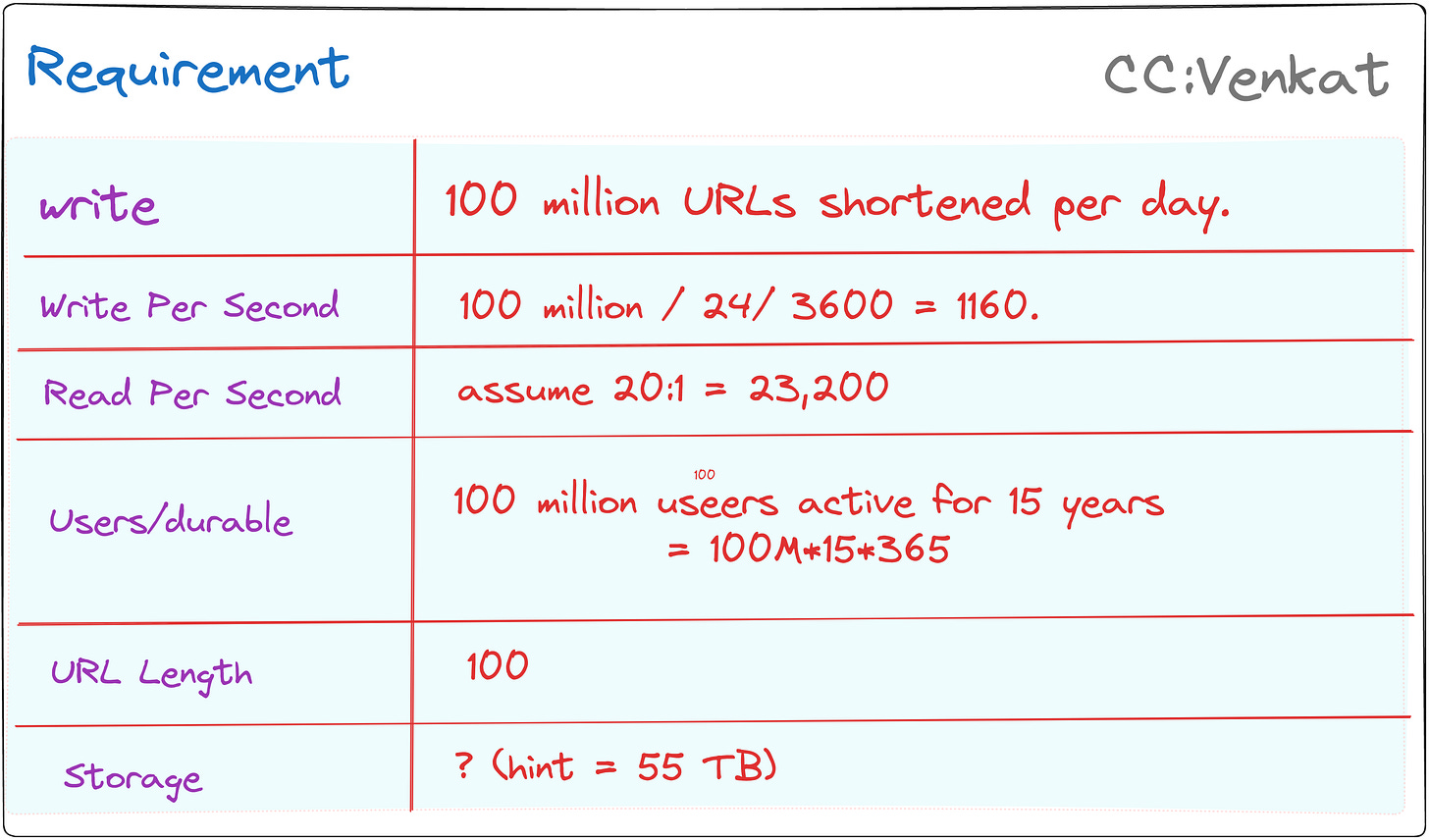

Interviewer: Sure, let's assume that we need to design a highly scalable and available URL-shortening service that can handle millions of requests

Requirements

You should design a system that can handle up to millions of URLs generated per day. The length of the generated URLs should be as short as possible. They can be a combination of characters (a-z, A-Z) as well as numbers (0–9).

Use case

User input a long URL, the service returns a much shorter URL

User clicks the shortened URL, they are redirected to the original URL

The service should be highly available, scalable, and fault-tolerant.

Functional Requirements:

Given a URL, our service should generate a shorter and unique alias for it. This is called a short link. This link should be short enough to be easily copied and pasted into applications.

When users access a short link, our service should redirect them to the original link.

Users should optionally be able to pick a custom short link for their URL.

Links will expire after a standard default timespan. Users should be able to specify the expiration time.

Non-Functional Requirements:

The system should be highly available. This is required because, if our service is down, all the URL redirections will start failing.

URL redirection should happen in real time with minimal latency.

Shortened links should not be guessable (not predictable).

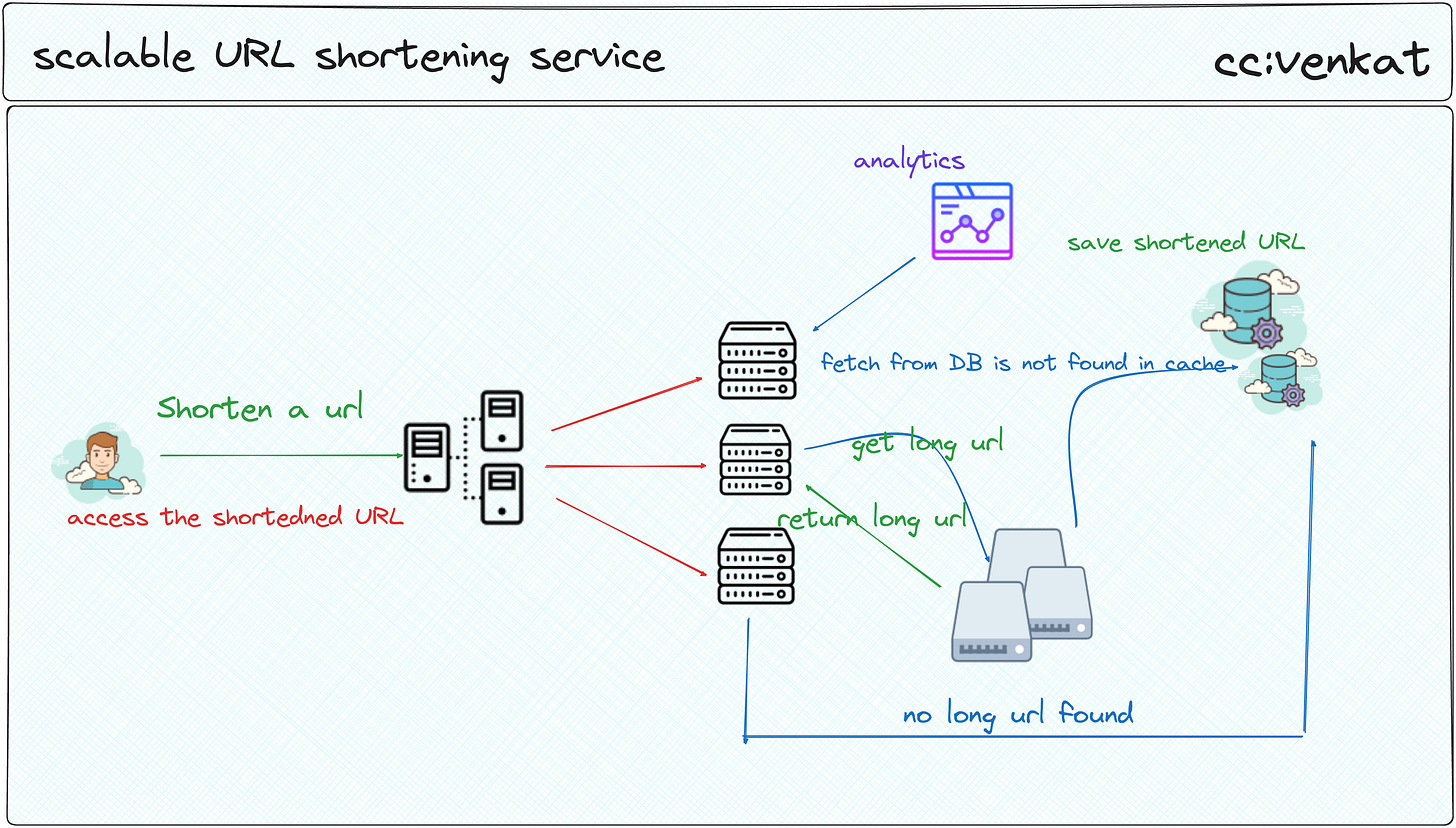

Interviewee: Okay, got it. To handle such high traffic, I would use a distributed system approach. The system would consist of multiple servers, load balancers, and databases that work together to provide the service.

Interviewer: Can you tell us more about how the servers would work together?

Interviewee: Sure. The load balancers would be responsible for distributing incoming requests to the servers. Each server would shorten the URL and store the mapping in its local database. The databases on each server would be kept in sync through replication so that the mapping is consistent across all servers.

Interviewer: Can you walk us through the complete systems of building at scale?

Interviewee: Sure! When a user requests a shortened URL, the load balancer would direct the request to one of the servers.

The server would then generate a unique identifier for the URL and store the mapping in its database.

The server would then return the shortened URL, which consists of the unique identifier and the base URL of the service (e.g. bit. ly/[unique_identifier]).

Low-level design

The user will click the shortened URL link.

The load balancer will send the request to the web servers.

If the shortened URL is already in the cache, return the long URL immediately.

Bad cases will happen if the shortened URL is not in the cache, the service will have to go find it in the database. The worst-case scenario is the user enters an invalid URL.

The long URL will be returned to the user.

Interviewer: That sounds great! I see you stated about having a load balancer. Can you talk about the load balancer?

Interviewee:

We can add a Load-balancing layer at three places in our system:

Between Clients and Application servers

Between Application Servers and database servers

Between Application Servers and Cache servers

Initially, we could use a simple Round Robin approach that distributes incoming requests equally among backend servers. This LB is simple to implement and does not introduce any overhead. Another benefit of this approach is that if a server is dead, LB will take it out of the rotation and stop sending any traffic to it.

A problem with Round Robin LB is that we do not consider the server load. As a result, if a server is overloaded or slow, the LB will not stop sending new requests to that server. To handle this, a more intelligent LB solution can be placed that periodically queries the backend server about its load and adjusts traffic based on that.

Interviewer: What about redirecting the user from the shortened URL to the original URL?

Interviewee: When a user clicks on a shortened URL, the request would be redirected to one of the servers, which would then look up the original URL in its database based on the unique identifier. The server would then redirect the user to the original URL.

Interviewer: How would you handle conflicts when two users try to shorten the same URL at the same time?

Interviewee: To handle this scenario, I would use a distributed locking mechanism to ensure that only one server can shorten a URL at a time.

The locking mechanism would work by having a lock server that all the other servers would check with before shortening a URL.

If the lock server returns that the URL is locked, the server will wait until the lock is released before proceeding.

Interviewer: But then … How would you generate a short and unique key for a given URL?

Sure, there are multiple ways I can think of…

🗒️Random String Generation: One simple approach is to generate a random string of characters that serves as the key. This can be achieved using cryptographic libraries like bcrypt or hash functions like SHA-256. The length of the generated string determines the size of the shortened URL. For example, a six-character key would result in a URL that's only 18 characters long (assuming ASCII characters).

🟢 Simple implementation, no need to store original URLs in the database, easy to generate keys.

🔴 Collision risk - there's a chance that two different URLs could produce the same random string, which would cause conflicts when trying to map them back to their respective original URLs. To mitigate this risk, longer strings are required, increasing the overall length of the shortened URL.

To reduce collision risks, one can use techniques like hashing the entire URL before generating the random string, ensuring that each URL will have a distinct representation even if its content changes slightly over time.

🗒️Incremental IDs: Another method is to assign an incrementing integer ID to each new URL being added to the system. The ID can then be converted into a shorter base-n number system (e.g., base36 uses digits 0-9 and letters A-Z) to create a shorter key.

🟢Simple implementation, deterministic, no collision risk once all possible combinations have been exhausted.

🔴Longer URLs may still not be very short, and the system must keep track of every single URL ever created, requiring significant storage space for the ID table. Additionally, if the system needs to support the deletion of URLs, managing those deleted entries becomes more complex since they still occupy valuable ID space.

🗒️Hash Functions: Using a well-designed hash function like SHA-256 or MD5 can provide both uniqueness and brevity. In this case, the output of the hash function serves as the key. Since hash outputs are usually much smaller than the input data, they make excellent candidates for shortening URLs. However, due to collisions, multiple URLs might produce identical hash values, so additional steps should be taken to ensure uniqueness.

🟢Hash functions are computationally efficient, making them suitable for large-scale systems. They also offer strong security properties.

🔴As mentioned earlier, hash collisions pose a challenge, necessitating the use of techniques like salted hashes or double hashing to increase the likelihood of uniqueness while maintaining a reasonable key length.

🗒️Prefixes and Suffixes: Instead of generating a completely random string, another option is to add prefixes or suffixes to the URL itself to create a shorter key. For instance, adding "tinyurl.com/" at the beginning and "/{short_key}" at the results in a shortened URL.

🟢No need to generate random strings, simpler implementation compared to other methods.

🔴Limited customizability, the potential for leaking information about the original URL through the prefix or suffix.

Interviewer: How would you ensure the availability and reliability of the service?

Interviewee: To ensure high availability, I would use multiple load balancers and multiple servers in different regions. This would allow the service to continue functioning even if one or more servers or regions are unavailable. Additionally, I would use multiple databases and employ database replication to ensure that the data is always available and consistent.

Interviewer: How would you handle security issues like malicious URL redirection and spamming?

Interviewee: To handle these issues, I would implement several security measures. For example, I would rate limit requests from a single IP address to prevent spamming.

I would also implement a mechanism to detect and block malicious URLs. Additionally, I would implement proper authentication and authorization to control access to the service and prevent unauthorized usage.

Interviewer: How would you handle the growth of the mapping data over time?

Interviewee: To handle the growth of the mapping data, I would use a distributed database system such as Cassandra or Amazon DynamoDB, which are designed to handle large amounts of data.

I would also implement horizontal scaling, where new servers can be added as the data grows, to ensure that the system can handle increasing amounts of traffic. Additionally, I would use data partitioning to distribute the data evenly across the servers and minimize the impact of hot spots.

Interviewer: Great, thanks for explaining that. Can you talk about the trade-offs between using a hash function and a database to store the mapping between the short and long URLs?